Prepare Your Data Strategy for the Shift to Personalization

Recently, the FIAP Awards kicked off for four days of talks, Q&As and insights on advertising and communications.

If you’re not familiar with FIAP (Festival Iberoamericano de Publicidad), it’s one of the leading creative events in Latin America. It is hosted in Buenos Aires, which is also home to one of our offices. This year’s edition featured several key speakers, including Sir Martin Sorrell of S4 Capital and MediaMonks founder Wesley ter Haar.

One of the recurring themes throughout the conference was the challenge brands face in using data effectively, creatively and affordably. If this sounds relatable, get up to speed on these big ideas surrounding the opportunities that data affords.

Budgets are tight, and the appetite for content isn’t slowing down.

“Nowadays you need more for less. There’s a constant need for content, but budgets are getting smaller.” – Wesley ter Haar

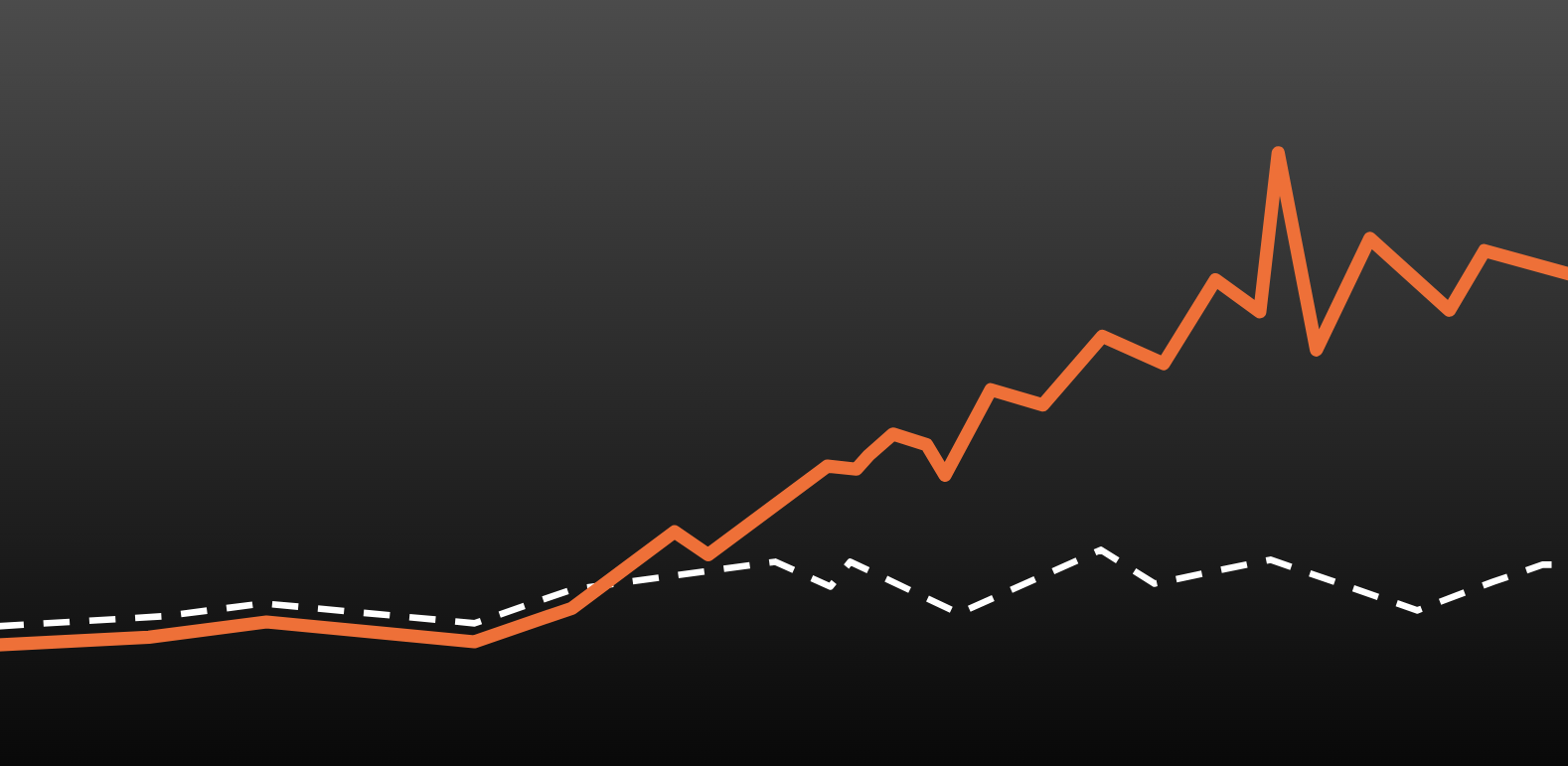

In his tongue-twisting talk “Ten Techtonic Trends,” Wesley ter Haar pointed out that marketing budgets peaked in 2016, only to fall the following year. Despite stagnant and decreasing budgets, the drive for content keeps increasing, as does the demand for more personalized, custom experiences. And if that doesn’t sound difficult enough, the challenge is compounded by an explosion of new forms of media: AR/VR experiences, live video, 360-degree video and more. Those in fear of missing out on the next big media platform might scramble to develop content for each, but this can be difficult to afford and organize. When producing so much content for so many different platforms, it’s easy for everything to feel a bit disconnected. That’s why it’s important to switch up your mentality and framework (find out how below).

Developing assets at scale is one way to satisfy the growing hunger for content. It’s a process and creative framework for turning only a handful of pieces of content into hundreds, thousands or maybe billions of unique assets tailored to hyper-specific segments in your market. But how can organizations pull it off affordably? The answer lies in changing their way of thinking, which brings us to our next big idea:

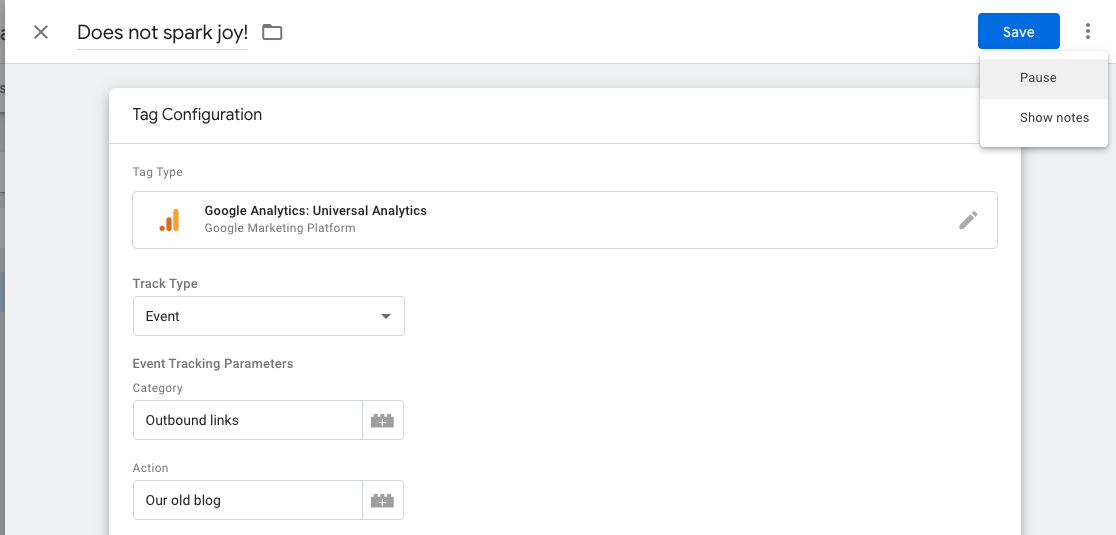

Brands must integrate data and analytics throughout the creative process, not just at the end.

“Data is information, and information is power. What gives you the difference is to interpret it and know how to use it … and use it as a basis to create something.” – Eva Santos

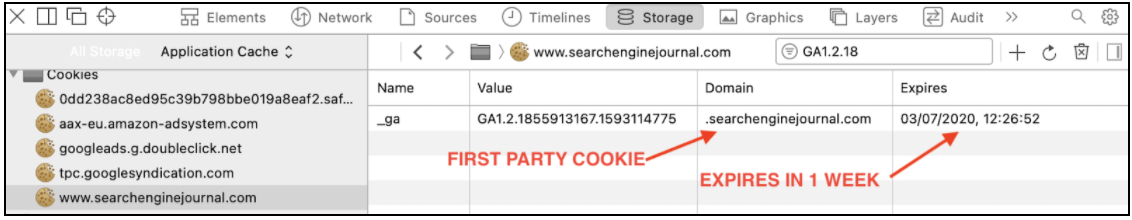

Santos touched upon a key idea from FIAP: that relegating data and analytics to the end of the creative cycle is obsolete. Instead, agencies must incorporate data into every step of the creative process. Sir Martin Sorrell had a similar message when he said: “Data will inform creative and it will inform media planning. It will make them better, it won’t make them worse.”

Sir Martin Sorrell used the example of Netflix recommendations, which surface customized posters and dynamic trailers tailored to audiences’ unique preferences. It’s easy to see how this makes content compelling, but it’s also a smart and economical way to generate tons of assets from relatively few pieces: with just 115 scenes and 3 intro animations, you could make almost 1.5 million pieces of content.
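The talk does not spell out how that figure was derived, so what follows is only a sketch of one reading that lands close to it: treating each asset as an ordered sequence of three distinct scenes drawn from the 115. The slot count of three is an assumption, and the role the 3 intro animations played in the original calculation is unknown, so they are left out here.

```python
import math

SCENES = 115  # number of scenes quoted in the talk
SLOTS = 3     # assumption: each asset strings together 3 distinct scenes

# Ordered selections without repetition: 115 * 114 * 113
variants = math.perm(SCENES, SLOTS)
print(f"{variants:,}")
```

Under that assumption, 115 scenes yield 1,481,430 distinct sequences. Whatever the exact recipe behind the quoted number, this is the sense in which a small pool of source material can fan out into a very large number of assets.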

But this requires a bit of rethinking your strategy, too. Assets at scale rely on content frameworks punctuated with dynamic variables. So rather than developing a different piece of content per segment or interest, you simply develop a framework which you can use to test, scale and create more content without an added cost.
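As a rough illustration of a framework punctuated with dynamic variables, the sketch below fills one fixed message structure with every combination of a few variable values. The template text, variable names and values are all hypothetical and are not taken from any of the talks.

```python
from itertools import product
from string import Template

# Hypothetical framework: one fixed structure with three dynamic variables.
framework = Template("$greeting Watch the new $genre trailer, $cta")

# Hypothetical values per variable; in practice these would come from audience data.
variables = {
    "greeting": ["Hey there!", "Welcome back!"],
    "genre": ["drama", "comedy", "sci-fi"],
    "cta": ["now streaming.", "out this Friday."],
}


def render_variants(template, options):
    """Yield one rendered asset per combination of variable values."""
    names = list(options)
    for combo in product(*(options[name] for name in names)):
        yield template.substitute(dict(zip(names, combo)))


assets = list(render_variants(framework, variables))
print(len(assets))  # 2 * 3 * 2 combinations
print(assets[0])
```

Adding one more value to any variable grows the number of assets without touching the framework itself, which is where the savings of this approach would come from.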

Demographics are dead; long live preferences.

“Demographic information doesn’t give us anything, it’s all about the users’ preferences.” – Wesley ter Haar

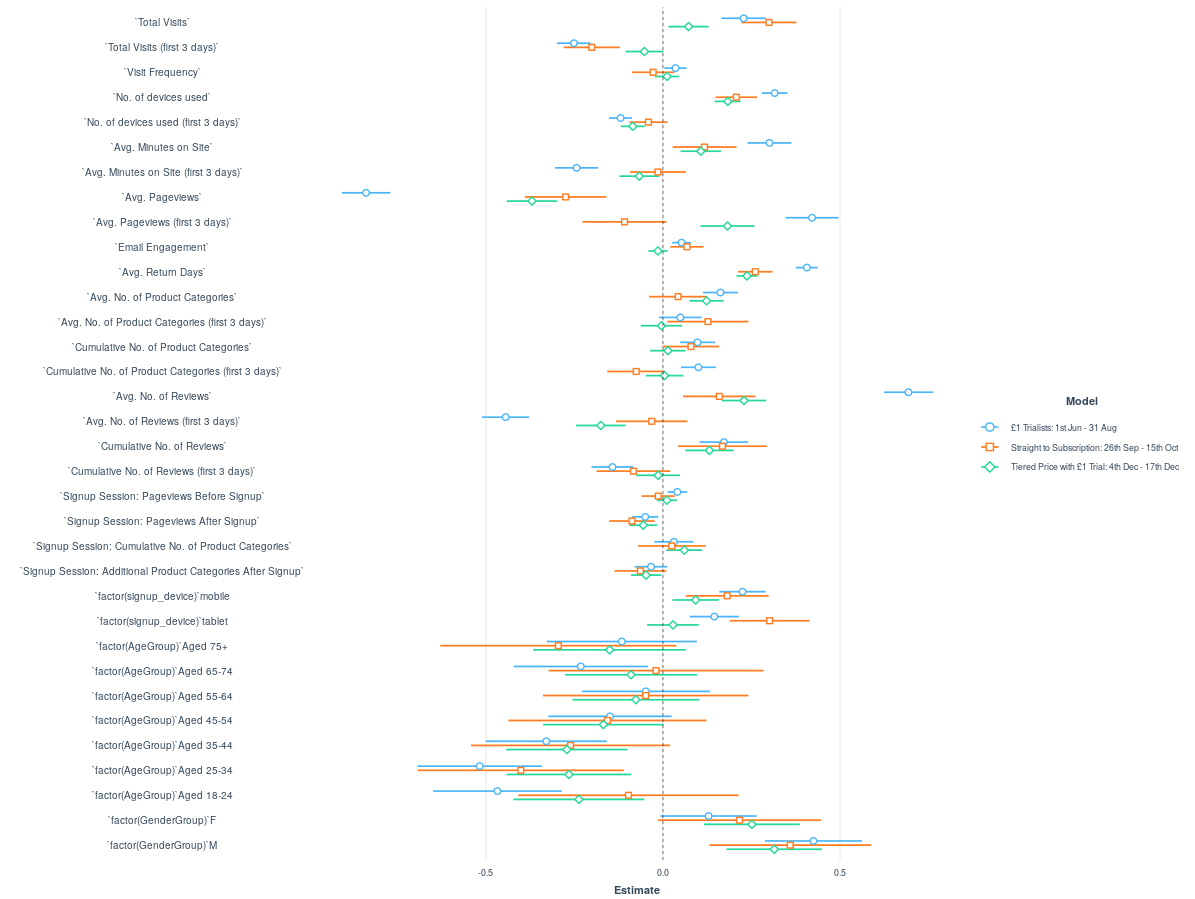

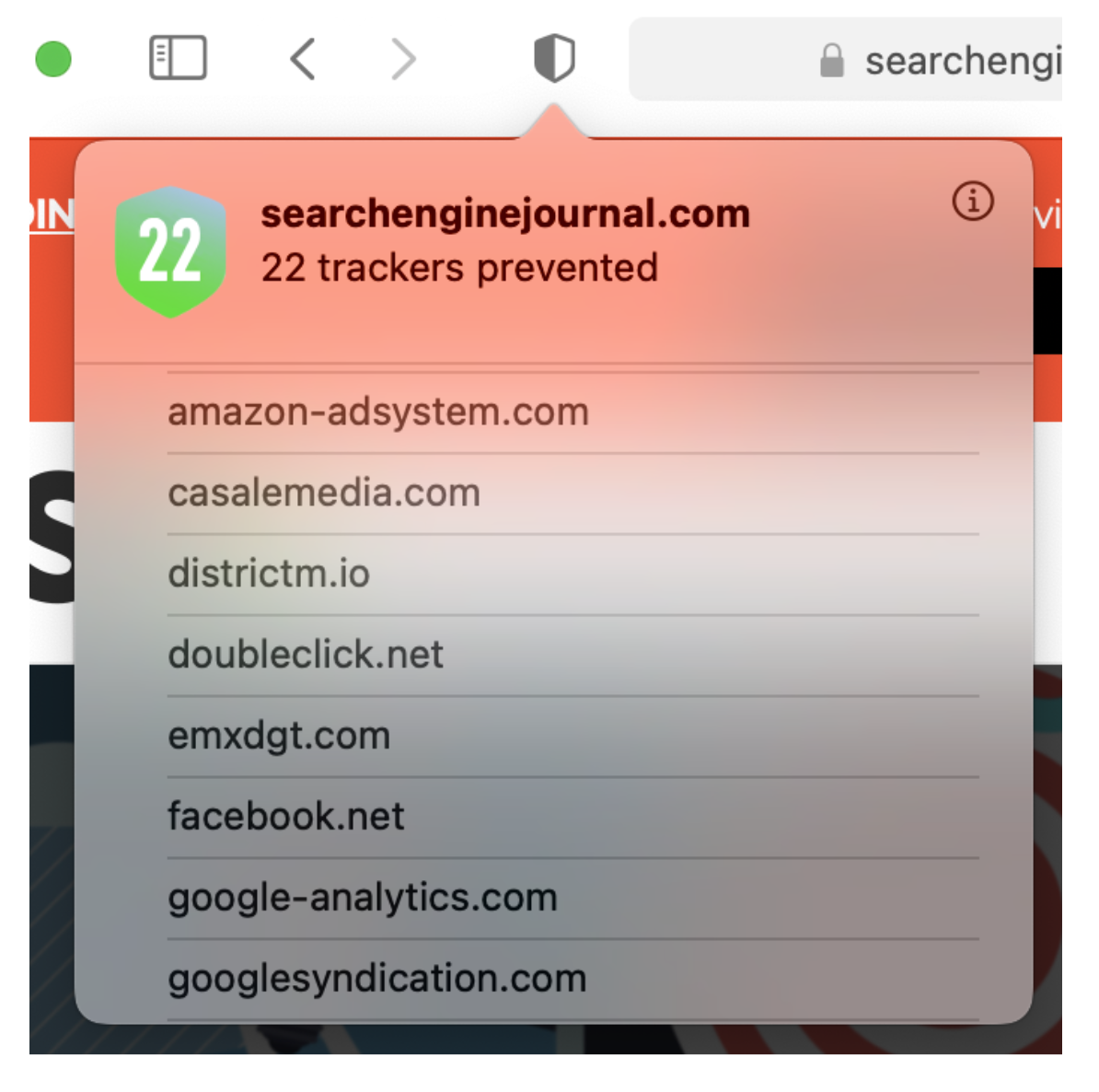

Bad news: demographics like geography, age or gender are dead. Good news: with today’s technology, delivering on user preferences is king. Ter Haar illustrated the idea in his talk with an example. Consider a young girl on the west coast who loves Breaking Bad. She shares far more of her taste with other fans of the show, like an older man in Kentucky who’s also obsessed with it, than with girls in her community who hate it.

The next big battleground is personalization and context, which means both new and legacy brands will need to revise their data strategies to stay on top. And the stakes are high: Adobe predicts that $800 billion will go to just the top 15% of companies, those that get the formula right. That makes it more important than ever to get an effective data strategy in place now.
