Transitioning Voice Bots from ‘Book Smart’ to ‘Street Smart’

Interest in voice platforms has grown significantly over the years. While they have proved life-changing for people who are visually impaired or have limited mobility, for many of us the technology’s primary convenience is saving us the effort of reaching for a phone. Yet we anticipate a future in which voice platforms provide more natural experiences that go beyond calling up quick bits of information. This ambition has prompted us to look for new ways to add value to conversations, making smart use of the tools made readily available by the organizations leading the charge in consumer-facing voice assistant platforms.

The primary challenge in unlocking truly human-like exchanges with virtual assistants is that their dialogue models are best suited to transactional exchanges: you say something, the assistant responds with a prompt for another response, and so on. But we’ve found that brands keen on taking advantage of the platform are looking for more than a rigid experience. “There are plenty of requests from clients about assistants, who are under the impression that the user can say whatever,” says Sander van der Vegte, Head of MediaMonks Labs. “What you expect from a human assistant is to speak open-ended and get a response, so it’s natural to assume a digital assistant will react similarly.” But this conversation structure goes against the grain of how these platforms typically work, which means we must find new approaches that better accommodate the experiences brands seek to provide their users.

Giving Digital Assistants the Human Touch

One way to make conversations with voice assistants more human-like is to empower them with a distinctly human trait: emotional intelligence. MediaMonks Labs is experimenting with this by developing a Google Assistant action that serves as a marriage counselor that uses sentiment analysis to draw out the intent and meaning behind user statements.

“If the assistant moves in this direction, you’ll get a far more valuable user experience,” says van der Vegte. One example of how emotional intelligence can better support the user outside of a counseling context would be if the user asks for directions somewhere in a way that indicates they’re stressed. Realizing that a stressed user who’s in a hurry probably doesn’t want to spend time wrangling with route options, the action could make the choice to provide the fastest route.
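
The directions example above can be sketched in a few lines of Python. This is only an illustration of the decision described, not how any assistant platform or the Labs action works: the `Route` type, the `respond_to_directions_request` function and the `user_seems_stressed` flag are hypothetical names, and the flag is assumed to come from some upstream sentiment step that is not shown.

```python
from dataclasses import dataclass


@dataclass
class Route:
    """Hypothetical route option; not part of any assistant SDK."""
    name: str
    minutes: int


def respond_to_directions_request(routes, user_seems_stressed):
    """Return the routes to offer the user.

    Assumption: `user_seems_stressed` is supplied by a separate
    sentiment-analysis step that this sketch does not implement.
    """
    if not routes:
        raise ValueError("at least one route is required")
    if user_seems_stressed:
        # Skip the back-and-forth: offer only the fastest route.
        return [min(routes, key=lambda r: r.minutes)]
    # Otherwise present every option, fastest first, and let the user choose.
    return sorted(routes, key=lambda r: r.minutes)


options = [Route("scenic", 42), Route("highway", 25), Route("toll road", 31)]
hurried_answer = respond_to_directions_request(options, user_seems_stressed=True)
relaxed_answer = respond_to_directions_request(options, user_seems_stressed=False)
```

In this sketch a stressed user hears a single suggestion, while a relaxed user still gets the full set of choices.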

As assistants become better equipped to listen and respond with emotional intelligence, their capabilities will expand to provide better and more engaging user experiences. In a best-case scenario, an assistant might identify user sentiment and use that knowledge to recommend a relevant service, like prompting a tired-sounding user to take a rest. Such an advancement would allow brands to forge a deeper connection with users by providing the right service at the right place and time. While Westworld-level AI is still a long way off, we’ll continue chatting and tinkering away at teaching our own bots the fine art of conversation, and we can’t wait to see what they’ll say next.

To better understand what this looks like, consider how two humans effectively resolve a conflict. Rather than accusing someone of acting a certain way, for example, it’s preferable to use “I messages” about how the other person’s actions make you feel, so they don’t feel attacked. Whether you begin a statement with “you” (accusatory) or “I” (inviting empathy) can therefore have a profound impact on how the others involved in a conflict respond. Likewise, our marriage counseling action analyzes the vocabulary and inflection in two users’ statements to offer them relationship advice. Its responses focus not just on what the users say but on how they say it.
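
To make the “you” versus “I” distinction concrete, here is a deliberately simple Python sketch that labels a statement by its opening word. It is a toy heuristic written for this article, not the sentiment analysis the action uses: the function name `classify_statement`, the label strings and the word lists are all assumptions, and it ignores inflection entirely.

```python
import re

# Hypothetical word lists: openers treated as accusatory or as "I messages".
YOU_OPENERS = {"you", "you're", "you've", "your"}
I_OPENERS = {"i", "i'm", "i've", "i'd"}


def classify_statement(statement: str) -> str:
    """Label a statement by its first word (toy heuristic, assumption only)."""
    # Normalize case and curly apostrophes so "I’m" and "I'm" match alike.
    normalized = statement.lower().replace("\u2019", "'")
    words = re.findall(r"[a-z']+", normalized)
    if not words:
        return "neutral"
    if words[0] in YOU_OPENERS:
        return "accusatory"
    if words[0] in I_OPENERS:
        return "i_message"
    return "neutral"


labels = [
    classify_statement("You never listen to me."),
    classify_statement("I feel ignored when plans change."),
    classify_statement("We should talk about the weekend."),
]
```

A real system would need far more than the first word, but the sketch shows why two statements about the same grievance can call for different responses.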

“We can learn to speak more effectively to an AI, just like how AI learns to speak to us,” says Joe Mango, Creative Technologist at MediaMonks. According to him, past experience with bots has conditioned users to speak to them in, well, robotic ways. “When we had someone from our team test the action by simply speaking to it, he wasn’t sure what to say at first.”

Speaking a New Language

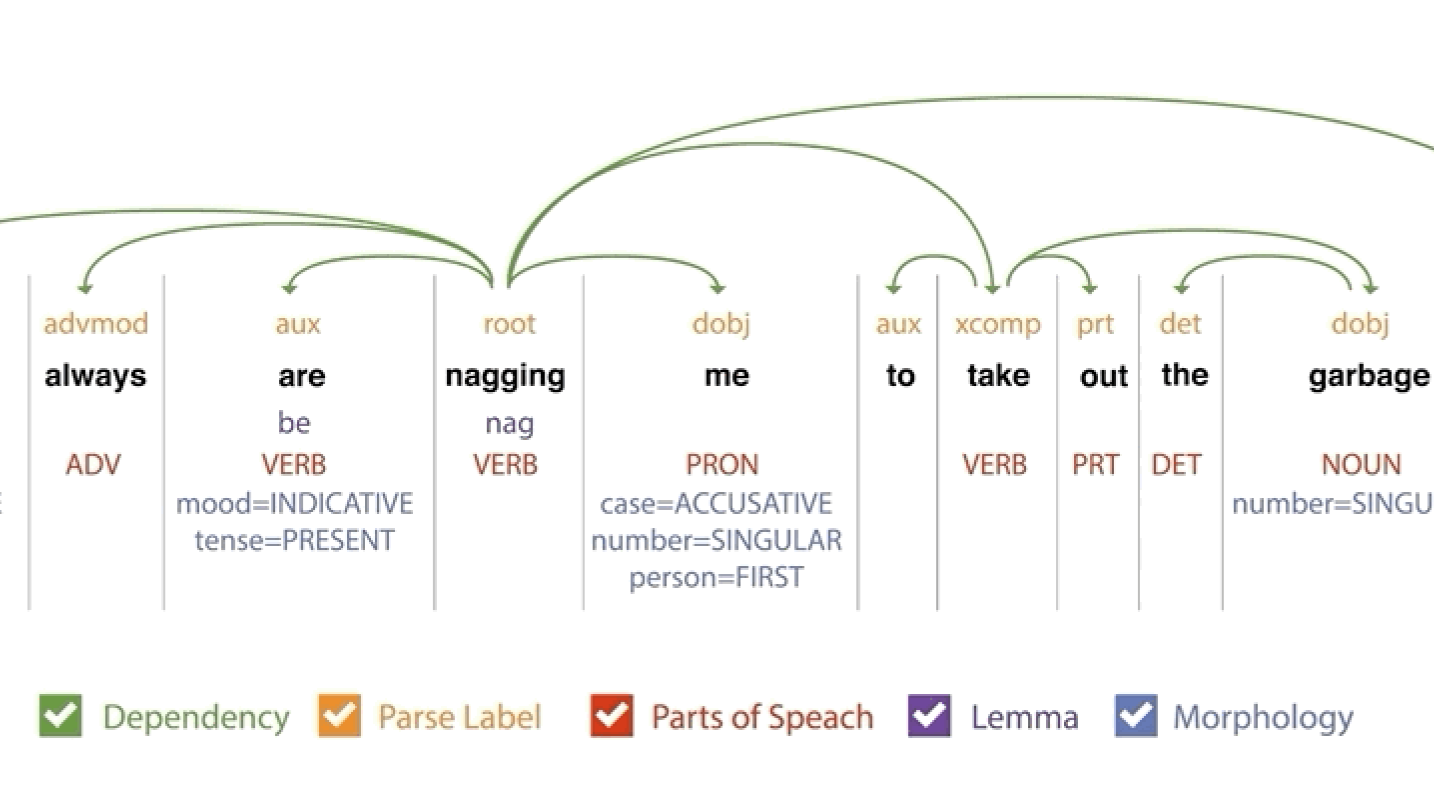

The action departs significantly from the standard conversational setup with a voice bot. Rather than having a back-and-forth chat with a single user, the action listens attentively as two users speak to one another. Getting Google Assistant to pull off such a feat gets at the heart of why so few actions provide such rich conversational experiences: the inherent limitations of the natural language processing platforms that power them. For example, Google Assistant breaks conversation down into a “you say this, I say that”-style structure that limits how long it opens the microphone to listen to a user response.

Conventional wisdom in conversational design shies away from “wide-focus” questions, encouraging developers to be as pointed and specific as possible so users can answer in just a word or two. But we think breaking out of this structure is not only feasible but could also provide the next big step toward richer, more genuine interactions between people and brands. “It speaks a lot to the mission of what we do at Labs,” says Mango. “We always want to push the limitation of the frameworks out there to provide for new experiences with added value.”

What does such an interaction look like? When a couple tested the marriage counselor action, one user mentioned his relationship with his brothers: some of them were close, but the user felt that he was becoming distant from one of them. In response, the assistant chimed in to remind the user that it was good that he had a series of close relationships to confide in. Its ability to provide a healthy perspective in response to a one-off comment—a comment not even about the user’s romantic relationship, but still relevant to his emotional well-being—was surprising.

Next Stop: More Proactive, Responsive Assistants

The action is effective, but “It’s just the first step down an ongoing path to support more dynamic sentence structures and deeper, richer conversation,” says Mango. The focus right now is on inflection and vocabulary, and future iterations of the action could draw on users’ tone of voice to glean their sentiment even more accurately. From there, findings from this experiment could help give other voice apps a level of emotional intelligence that lets organizations engage with their audiences in even more human-like ways.
