Be everywhere at once—at least virtually.

If you’ve ever fantasized about cloning yourself to keep up with all your commitments or finish your pending tasks, metahumans may be just what you’re looking for. Virtually, at least. As digital representatives of existing individuals, metahumans offer endless possibilities for content creation, customer service, film and entertainment at large. Sure, they won’t be able to do your dishes (at least not yet), but if you happen to be a public figure or work with one, they’re a game changer.

By lending likeness rights to their digital doubles, any influencer, celebrity, politician or sports superstar will be able to make simultaneous (digital) appearances and take on more commercial gigs without having to be on set. As John Paite, Chief Creative Officer of Media.Monks India, explains, “Celebrities could use their metahuman for social media posts or smaller advertising tasks that they usually wouldn’t have the availability for.” Similarly, brands collaborating with influencers and celebrities will no longer need to work around their busy schedules.

The truth is, virtual influencers are already a thing, albeit in the shape of fictional characters rather than digital doubles of existing humans. They form communities, partner with brands and engage directly and simultaneously with millions of fans. Furthermore, they are not stuck in one place at a time, nor do they operate under time zone constraints. In that regard, celebrities’ digital doubles combine the benefits of virtual humans with the appeal of a real person.

A new frontier of personalization and localization.

Because working with virtual humans can be more time-efficient than working with real humans, they offer valuable opportunities for personalization and localization. Much as we’ve been using Unreal Engine to deliver relevant creative at speed and scale, we can use MetaHuman Creator to take localization to a new level. As Senior Designer Rika Guite says, “If a commercial features someone who is a celebrity in a specific region, for example, this technology makes it easy for the brand to replace them with someone who is better known in a different market, without having to return to set.”

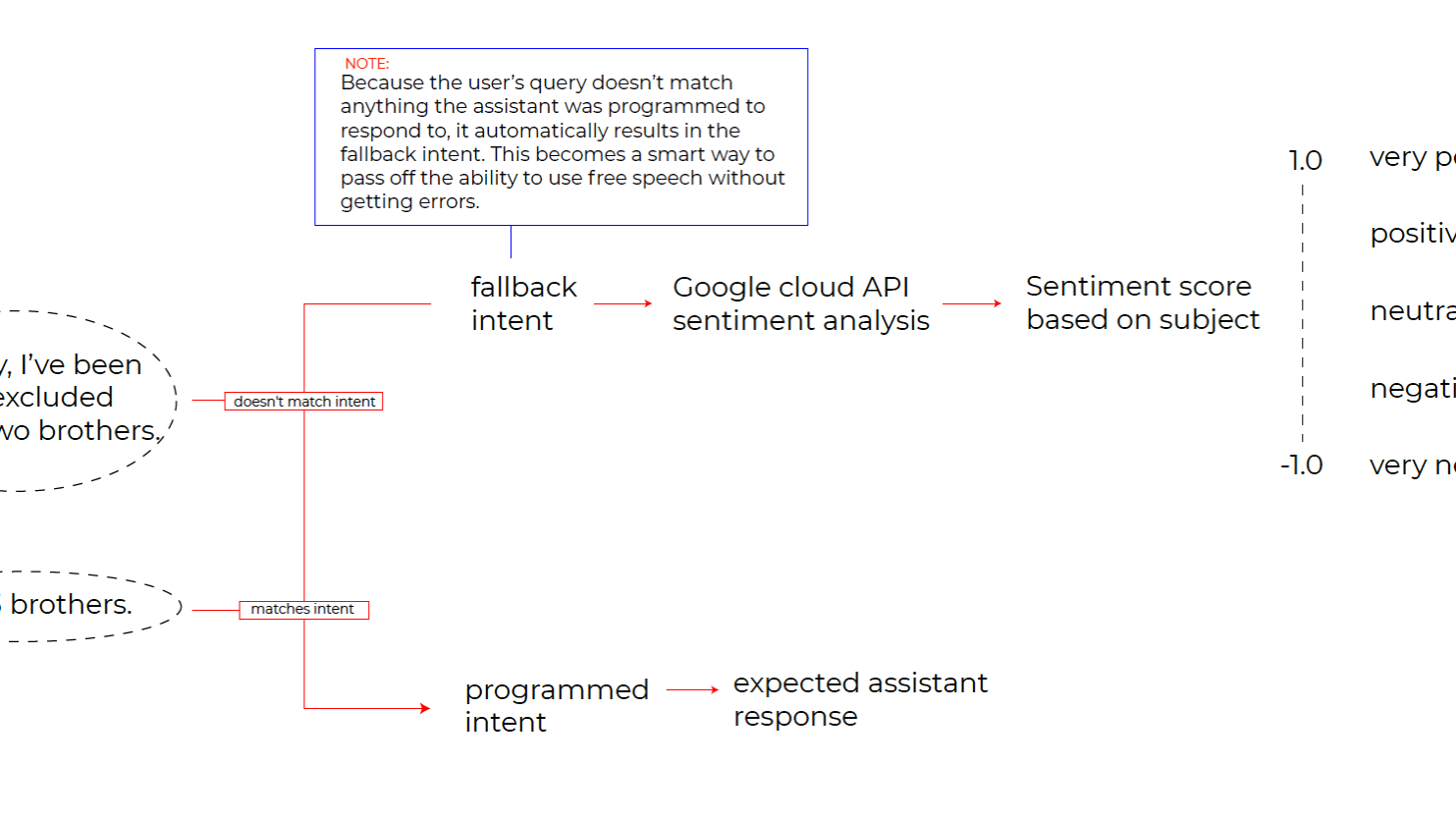

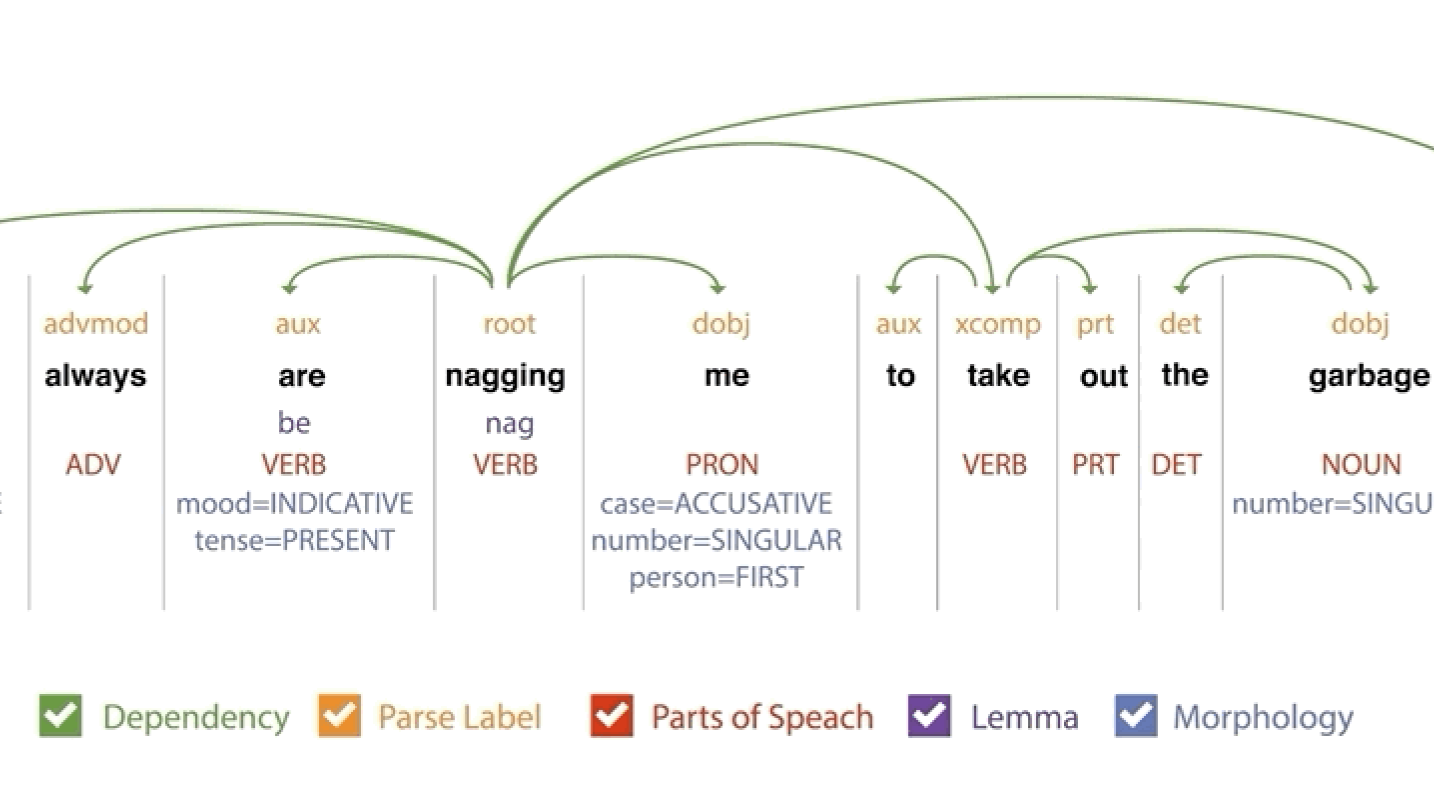

But not everything is about celebrities. Metahumans are poised to transform education, too, along with many other fields. “If you combine metahumans with AI, it becomes a powerhouse,” says Paite. “Soon enough, metahumans will be teaching personalized courses, and students will be able to access those at a lower price. We haven’t reached that level yet, but we’ll get there.”

For impeccable realism, the human touch is key.

To test how far metahumans are ready to go, our team scanned our APAC Chief Executive Officer, Michel de Rijk, using photogrammetry with Epic Games’ RealityCapture. This technique works with multiple photographs taken from different angles, lighting conditions and vantage points to truly capture the depth of each subject and build the base for a realistic metahuman model. Then, we imported the geometry into MetaHuman Creator, where our 3D designers refined it using the platform’s editing tools.

“Because Mesh to MetaHuman allows you to scan and import your real face, it’s much easier to create digital doubles of real people,” says our Unreal Engine Generalist Nida Arshia. That said, the input of an expert is still necessary to attain top-quality models. “Certain parts of the face, such as the mouth, can be more challenging. Some face structures are harder than others, too. If you want the metahuman to look truly realistic, it’s important to spend some time refining it.”

Once we got our prototype as close to perfection as possible, we used FaceWare’s facial motion capture technology to unlock real-time facial animations. While FaceWare’s breadth of customization options made it our tool of choice for this particular model, different options are available depending on the budget, timeline and part of the body you want to animate. Unreal’s LiveLink, for example, offers a free version that allows you to use your phone and is easy to implement for both real-time and pre-recorded applications, but it focuses on facial animations only. Mocap suits with external cameras allow for full-body motion capture, though only at mid-level fidelity. Recording a real human in a dedicated mocap studio, meanwhile, unlocks highly realistic animations for both face and body.

At the same time, the environment we intend the metahuman to inhabit is worth considering, as the clothes, hair, body type and facial structure will all need to fit that setting. Naturally, some software adapts better to one visual style than another.

While this technology is still in its early stages and requires some level of expertise, brands can begin to explore different ways to leverage metahumans and save time, money and resources in their content creation, customer service and entertainment efforts. Similarly, creators can start sharpening their skills and co-create alongside brands to expand the realm of possibilities. As Arshia says, “We must continue to push forward in our pursuit of realism by focusing on expanding the variety of skin tones, skin textures and features available so that we can build a future where everyone can be accurately represented.”