A Modeler’s View on Google's Meridian MMM Platform

As a leading marketing transformation consultancy at the forefront of marketing analytics, we have taken a deep look into Google's latest offering: Meridian, their new Market Mix Modeling (MMM) tool.

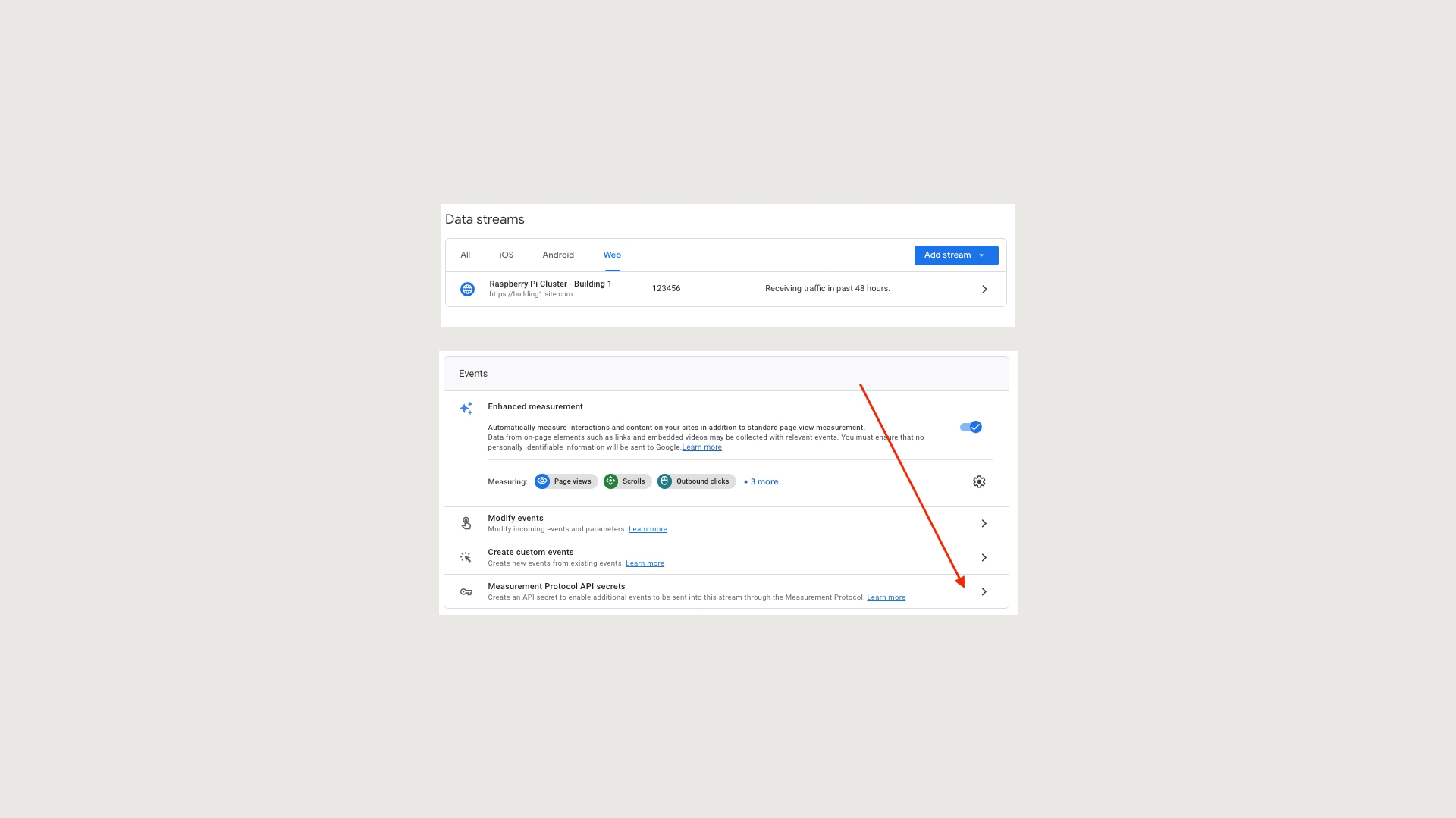

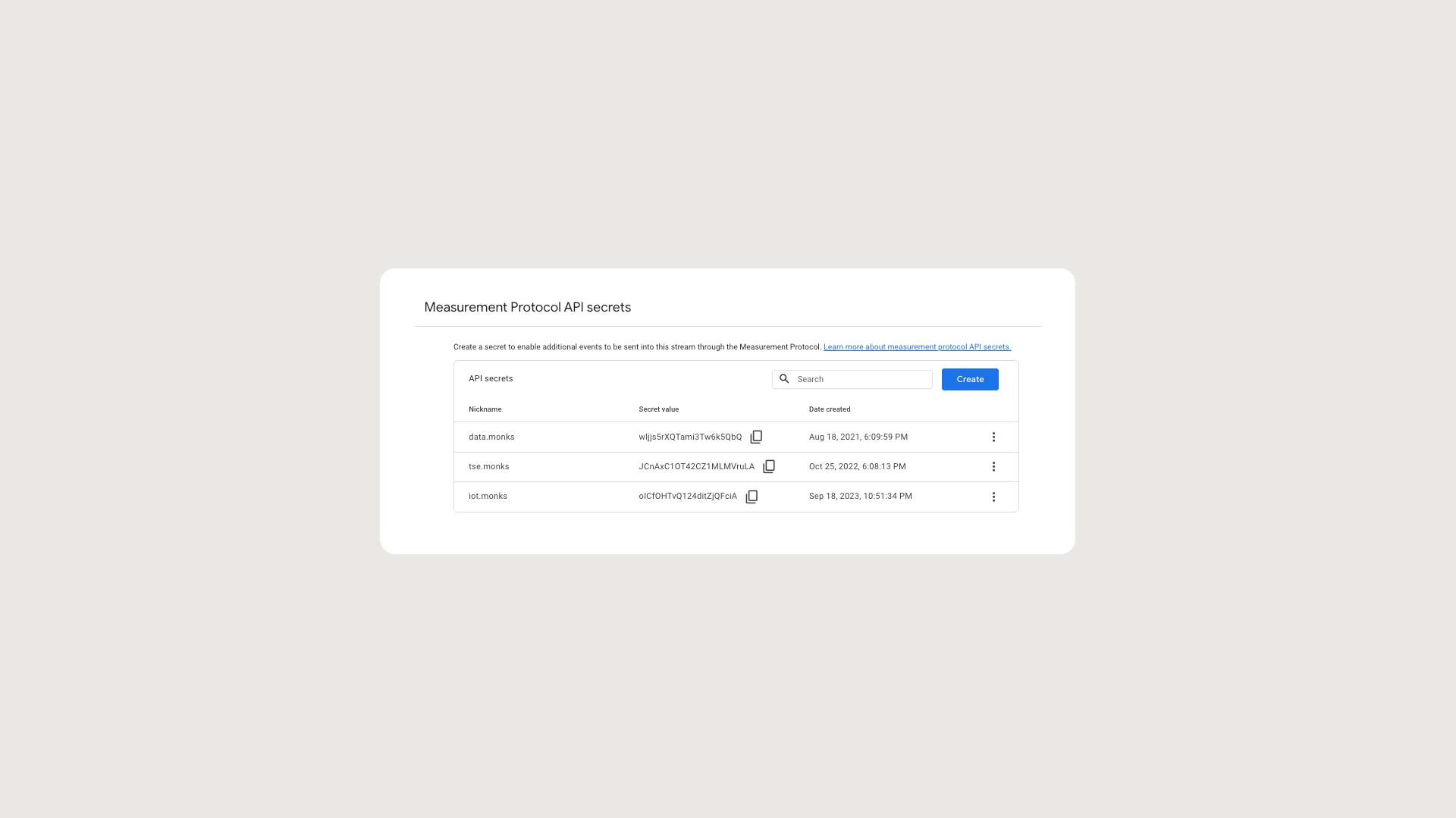

Google's Meridian builds on the previously released RBA/LMMM materials. New developments include the ability to feed geo experiment results into the model, as well as detail on reach for YouTube. The emphasis on triangulation via A/B testing to enhance MMM accuracy is a strategy we are well versed in ourselves, and it offers a good base to start from. However, it is crucial to note that while Meridian is a step forward in measurement, it remains just a tool: a sophisticated one that requires expert hands to wield effectively.

At Media.Monks, we pride ourselves on our robust internal platform, which is industry-leading in terms of speed and functionality. Meridian offers a step up for brands that are just starting their MMM journey, helping them move away from last-click attribution and better quantify media uplift.

At the end of the day, a model is only as good as its modeler: you can have the best model in the world, but if it's not fed with accurate, high-quality data or delivered clearly to key stakeholders, it's not going to be trusted (and therefore, adopted) in an organization.

From an experienced modeler’s perspective, these are some of the key points to consider with Meridian:

- The methodology behind Meridian is solid, and its emphasis on triangulation enhances the accuracy of the results.

- However, experienced econometricians will be essential for operating Meridian effectively in-house. Brands must ensure their teams possess the expertise to source the right data, build the models to reflect the real world, and translate data insights into actionable ROIs and response curves, or they risk making flawed decisions from the outputs.

- As with all MMM initiatives, data quality remains a critical factor in whether the model adds value and supports accurate decisions. Having accurate and complete data across all drivers of sales (media, price, promotions, seasonality, climate, etc.) is critical for MMM. Strong data foundations also give a significant advantage, whether brands are utilizing Meridian or any other technology.

- Effective communication within organizations is key to driving traction and implementation of MMM strategies, and explaining models clearly is essential to any MMM's success.

- The launch of Meridian represents a shift away from outdated attribution models towards a more accurate, incremental media valuation approach. Even if it isn’t the best-fit tool for all brands, it is another step in the industry’s maturation, especially in the wake of cookie deprecation and changing privacy legislation.

- Smaller clients with simpler data structures, such as ecommerce clients spending less than US$2 million on digital media, will benefit from this tool as an entry point to the world of MMM.

- Some clients may question running their media measurement on a platform built by a media owner.
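
For readers less familiar with the "ROIs and response curves" mentioned above, here is a minimal sketch of the idea in Python. It uses a Hill-type saturation curve, a form commonly used in MMM, to show how incremental sales flatten as spend rises. The function names and every number below are hypothetical and chosen purely for illustration; this is not Meridian's API, and it is not output from any real model.

```python
def hill_response(spend, max_effect, half_saturation, shape=1.0):
    """Incremental sales for a given spend under a Hill-type saturation curve.

    max_effect:      ceiling on incremental sales as spend grows (assumed value)
    half_saturation: spend at which half of max_effect is reached (assumed value)
    shape:           controls how sharply the curve bends (assumed value)
    """
    if spend <= 0:
        return 0.0
    scaled = spend ** shape
    return max_effect * scaled / (scaled + half_saturation ** shape)


def roi(spend, **params):
    """Average return: incremental sales per unit of spend."""
    return hill_response(spend, **params) / spend if spend > 0 else 0.0


def marginal_roi(spend, step=1.0, **params):
    """Return on the next unit of spend, approximated by a finite difference."""
    gain = hill_response(spend + step, **params) - hill_response(spend, **params)
    return gain / step


# Hypothetical channel parameters, for illustration only.
params = dict(max_effect=500_000.0, half_saturation=100_000.0, shape=1.0)

for spend in (50_000, 100_000, 200_000):
    print(
        f"spend={spend:>7,}  "
        f"incremental sales={hill_response(spend, **params):>10,.0f}  "
        f"ROI={roi(spend, **params):.2f}  "
        f"marginal ROI={marginal_roi(spend, **params):.2f}"
    )
```

Under these assumed parameters, both average and marginal ROI fall as spend increases, which is why a single ROI figure on its own can mislead budget decisions and why the curve needs an experienced modeler to interpret.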

In conclusion, Google's Meridian offers a solid starting point for less complex brands looking to enhance their measurement capabilities via a framework. Wider use of MMM as a trusted tool to measure and optimize media can only be good for the industry. That being said, hard work is still needed to attract econometric talent into the marketing world, both to maintain model accuracy and to increase adoption of these methodologies.

A good step forward, but still more to do on the talent front. See our post on apprenticeships to learn what we are doing to address this.

For more information on how we can help with your marketing effectiveness measurement or Market Mix Modeling, visit our Measurement page or contact us.