Search Generative Experience and Its Potential Impacts on Content and SEO

In May 2023, Google announced the introduction of a new search experience that’s primarily based on the use of generative AI to adapt to new search behaviors. In Google’s own words, it’s a way to “unlock entirely new types of questions you never thought Search could answer, and transform the way information is organized, to help you sort through and make sense of what’s out there.”

Search Generative Experience (SGE), or “generative Search Engine Results Pages (SERPs)” as I call it, serves as a facilitator for those seeking to find information on the web with greater speed. Instead of relying solely on keyword-based searches, you could pose complete questions and even follow up with additional inquiries, mimicking the conversational style of interacting with a language model-based chatbot.

However, since its launch, numerous discussions have arisen regarding this innovation—its advantages, disadvantages and potential limitations. For content marketing and SEO professionals, the question remains: what does this feature mean for our work?

What are the main changes we’ll see in the SERP?

Based on the various featured snippets and enhanced results currently available, it’s evident that SGE will greatly enhance users’ access to information. And if you are thinking, “I need a quick answer to a question, and I want it in an easily accessible place that allows me to navigate through complementary pages to delve deeper,” then Google will remain unrivaled.

It’s like getting all you need in one search. And it makes sense, right? After all, Google held a global market share of 90.6% in June 2023, as reported by Similarweb. Additionally, according to Semrush's The State of Search, about one in five searches resulted in a click on the first search result. These are significant numbers, and when we talk about clicks in top positions, we are also referring to visits to sites that have a user-first mindset, promote quality content creation, respect the best practices of E-E-A-T evaluations, conform to Google’s Helpful Content system and contribute to user engagement on the channel.

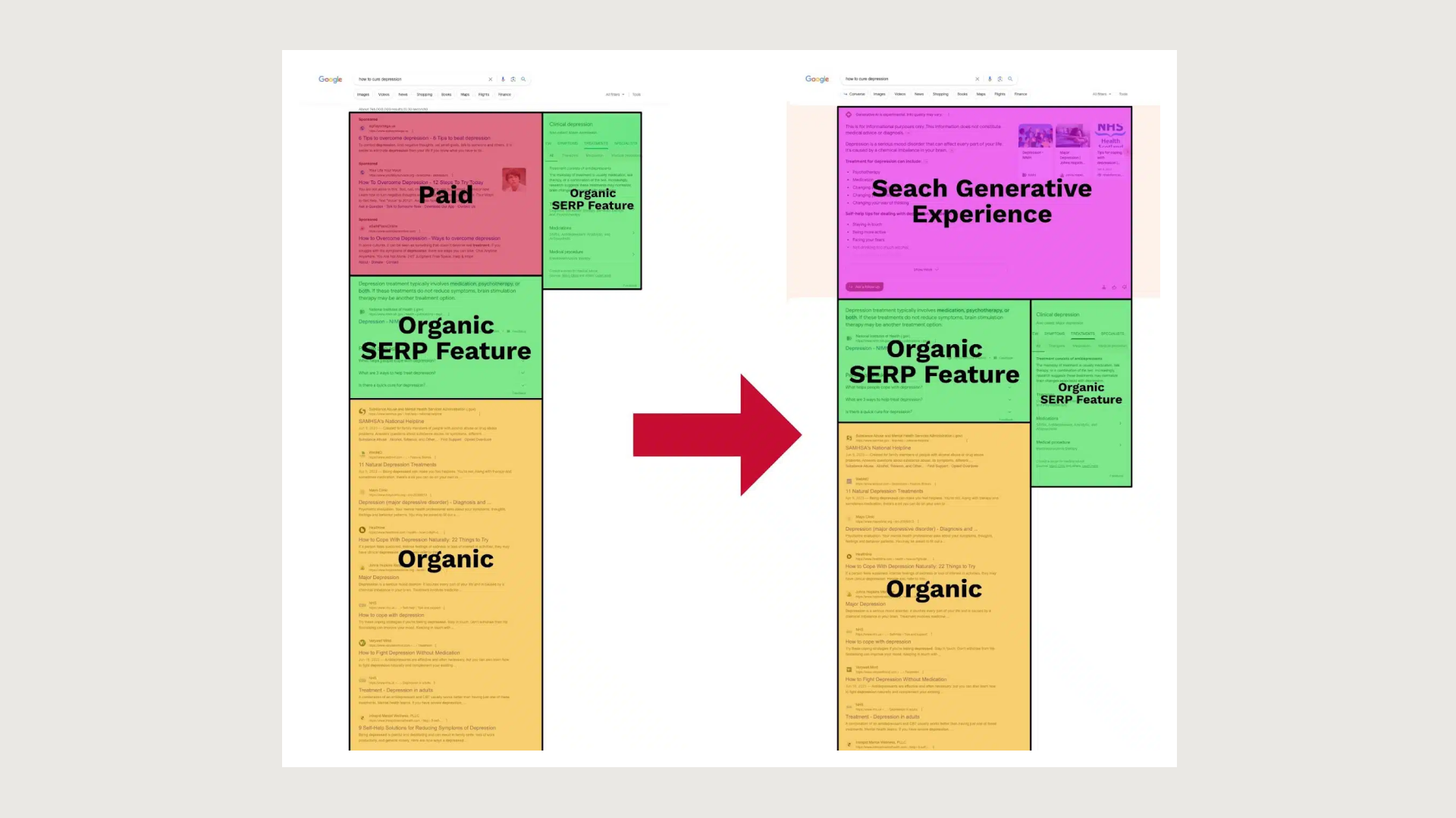

In terms of the interface, the biggest change brought about by SGE is in the top section of the search results page. Essentially, generative answers replace the traditional list of paid URLs, providing users with a more immersive and semantic experience.

The image below provides a clearer illustration—although it’s worth noting there are various result variations depending on the type of search, which I’ll explore below.

Source: Search Engine Land

Let’s look at some other examples I found.

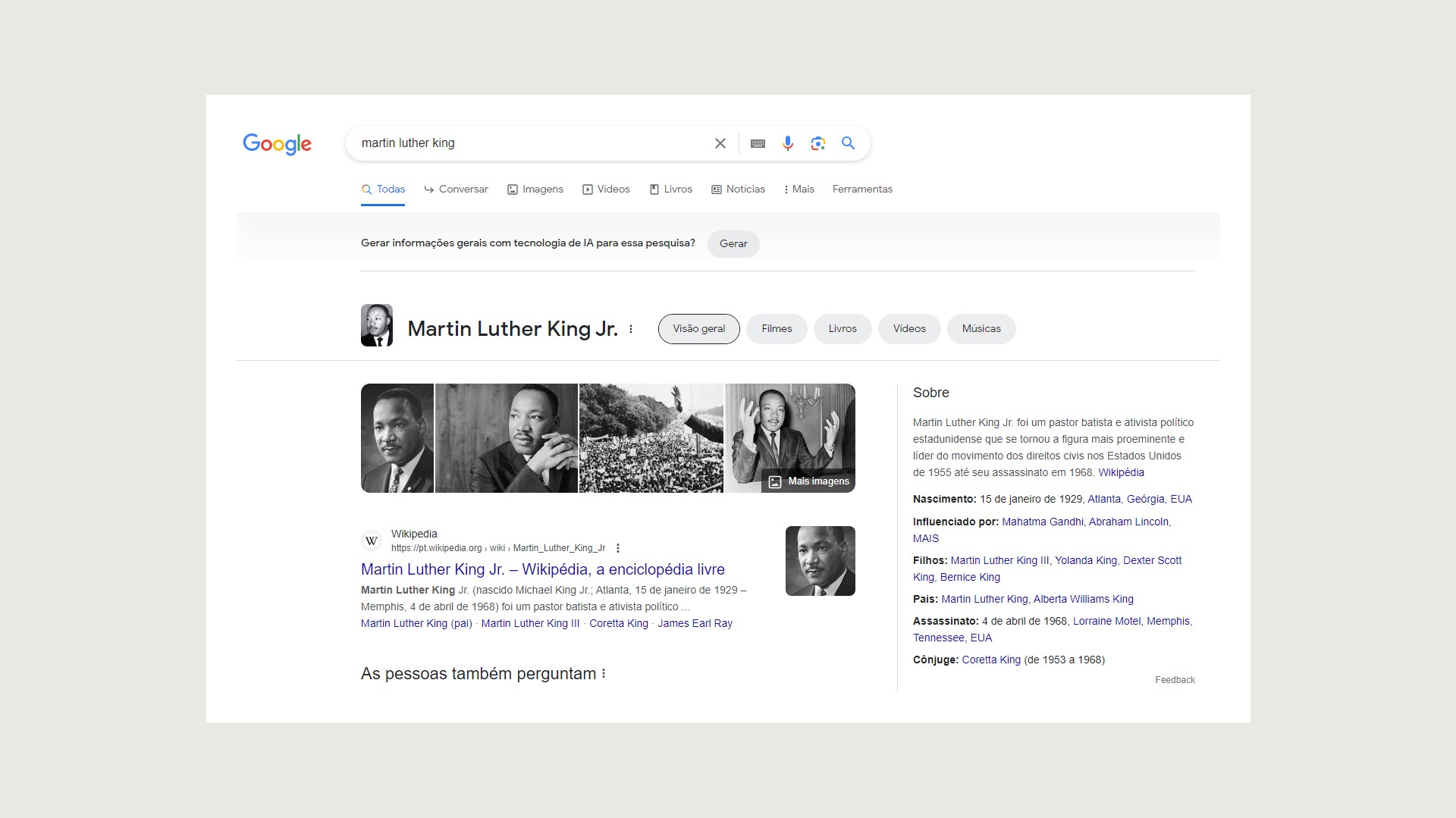

1. Informational search for a public figure

The Knowledge Panel shown when searching for Martin Luther King Jr. (in Portuguese).

When searching for a public figure, the Knowledge Panel still appears, and the SGE asks if you want to generate something from it. As the Knowledge Panel typically offers comprehensive information about public figures or brands, as long as the information on the corresponding Wikipedia page is reliable, SGE seems to recognize that it may not be necessary to generate or synthesize further information.

2. Transactional search

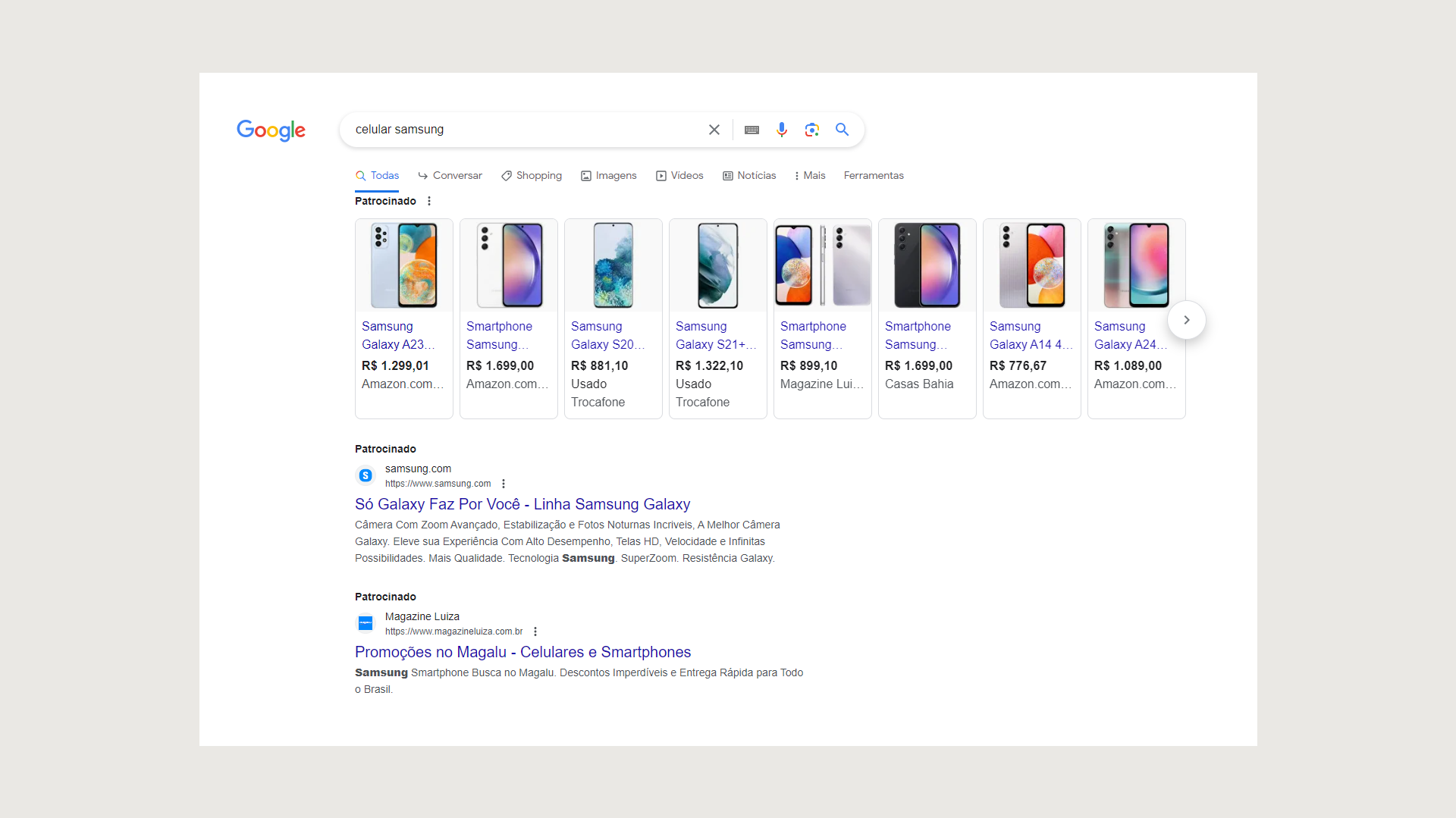

The main results page after searching for Samsung smartphones.

In the case of a transactional intent search, the SERP is predominantly taken over by ads. Upon reaching the end of the first scroll, SGE once again prompts you to generate information but doesn’t do it automatically. Since the query is purely transactional rather than a hybrid informational/transactional or commercial one (such as “best phones to buy on Black Friday”), SGE appears to understand that the user intends to view prices directly. For that purpose, the Shopping results provided are deemed sufficient.

3. Geolocated search

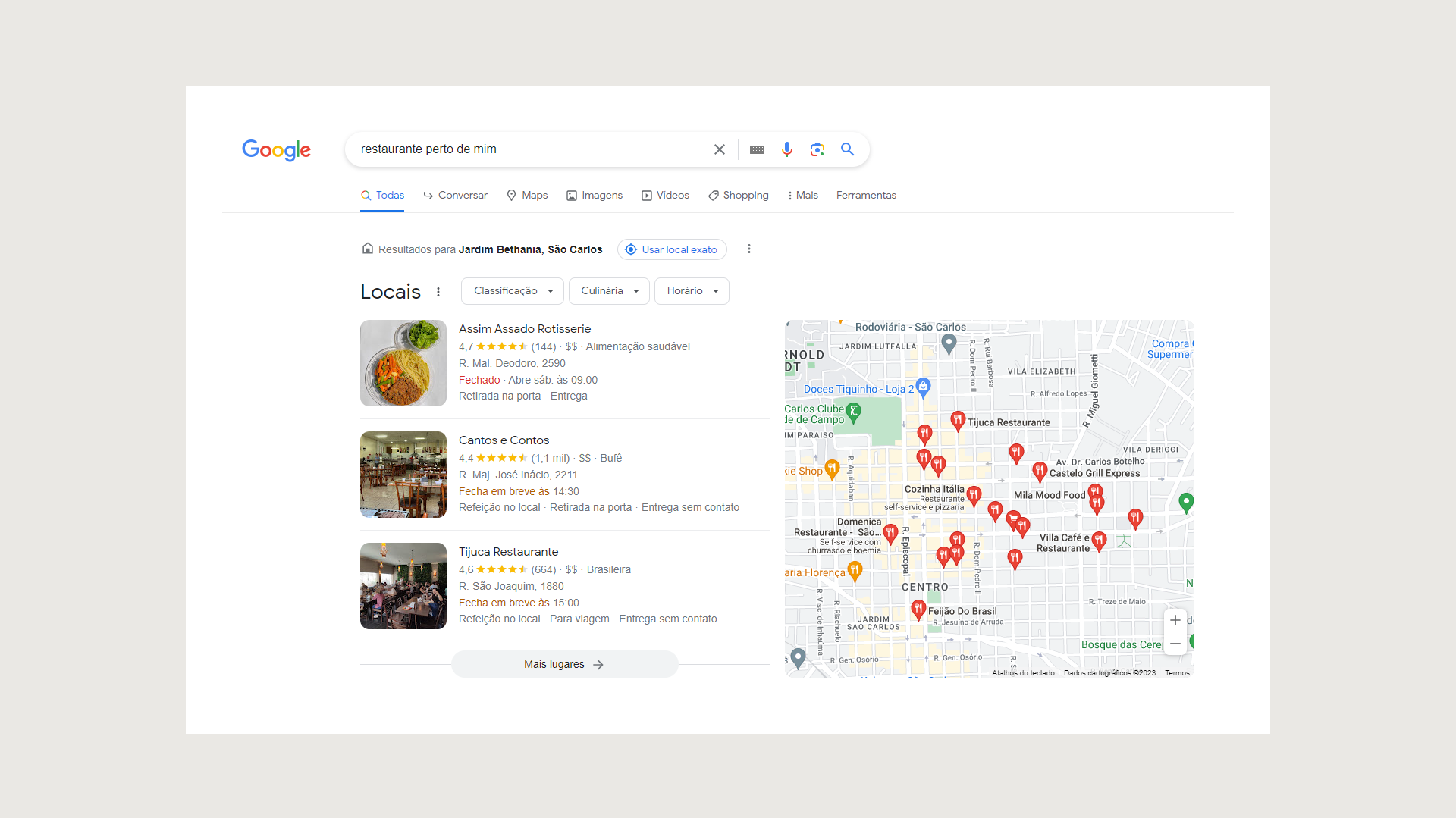

The results page when searching for restaurants in a specific location.

In this example of a geolocated search, SGE does not appear in the first tab, and organic results are not altered in any way. This is probably based on the same reasoning as in the previous example: the existing results are deemed sufficient for the intent.

4. Informational search about health

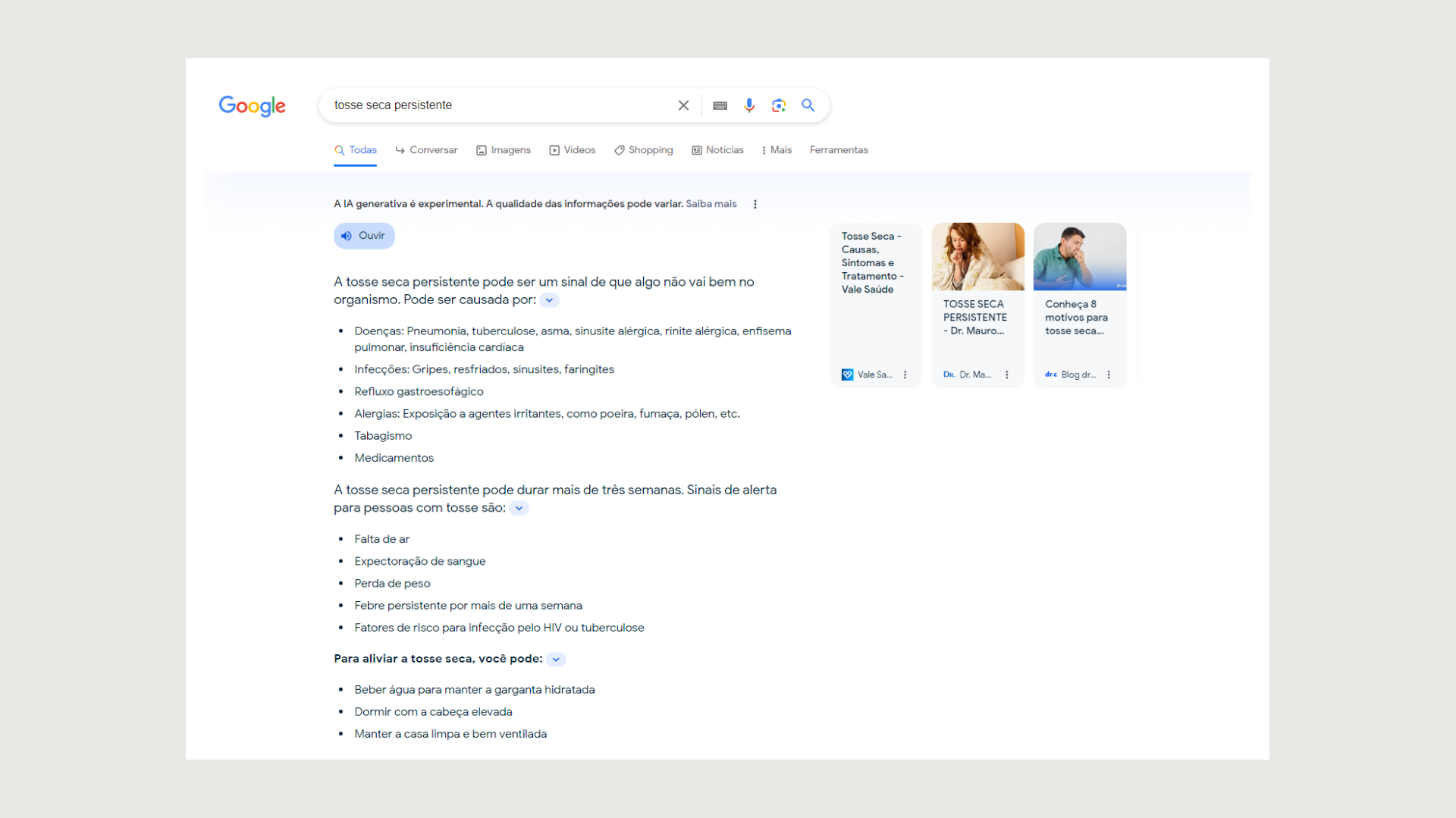

The results page when googling health-related information.

In the case of an informational intent search, SGE generates a comprehensive answer while displaying the first three organic results as snippets on the side. This format allows users who are seeking detailed information to access a full article. This is one of the primary advantages that I envision in the Search Generative Experience scenario: users who visit blogs or similar platforms will likely be more qualified and engaged with the content they choose to explore.

5. Informational search about finance

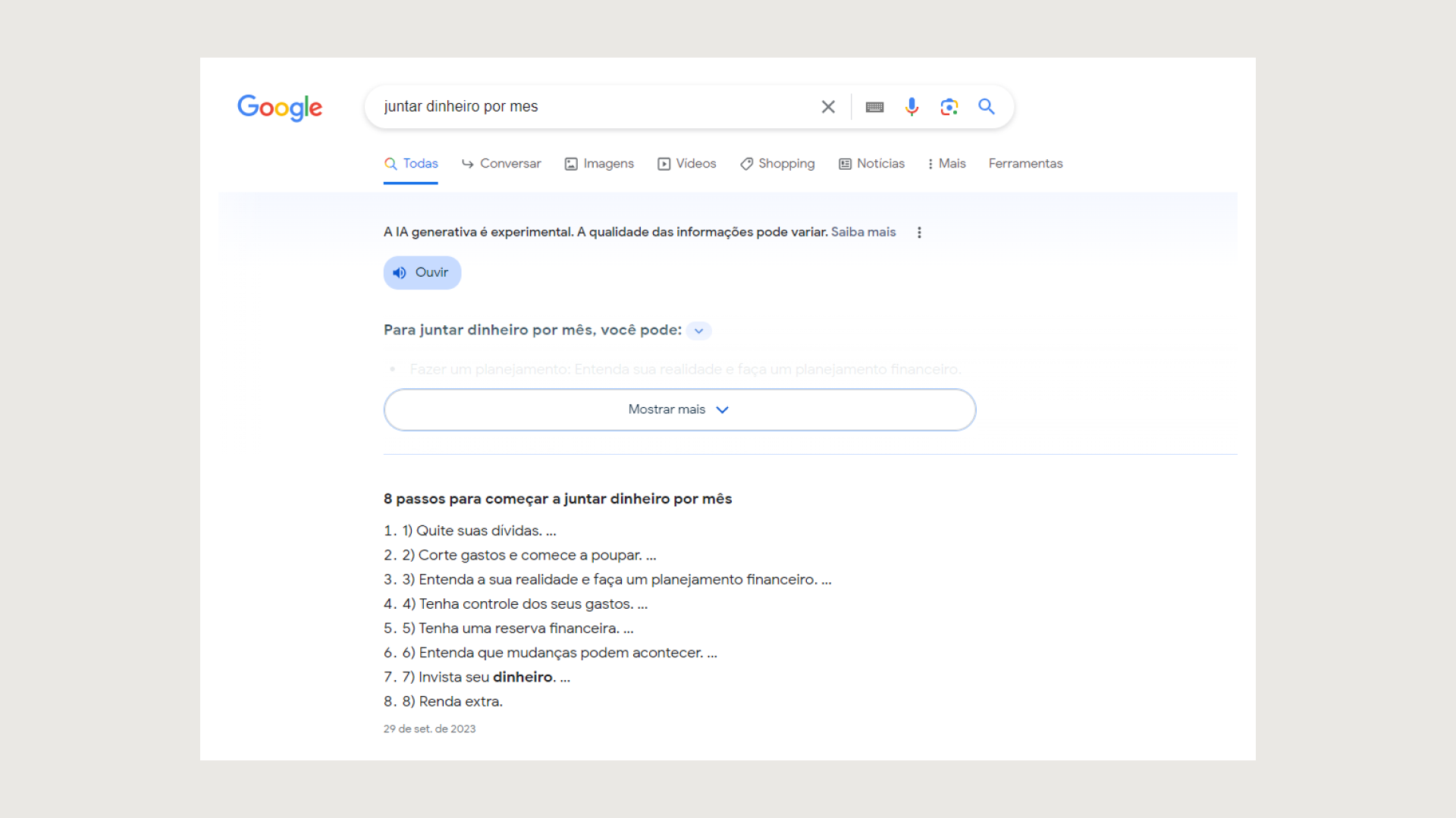

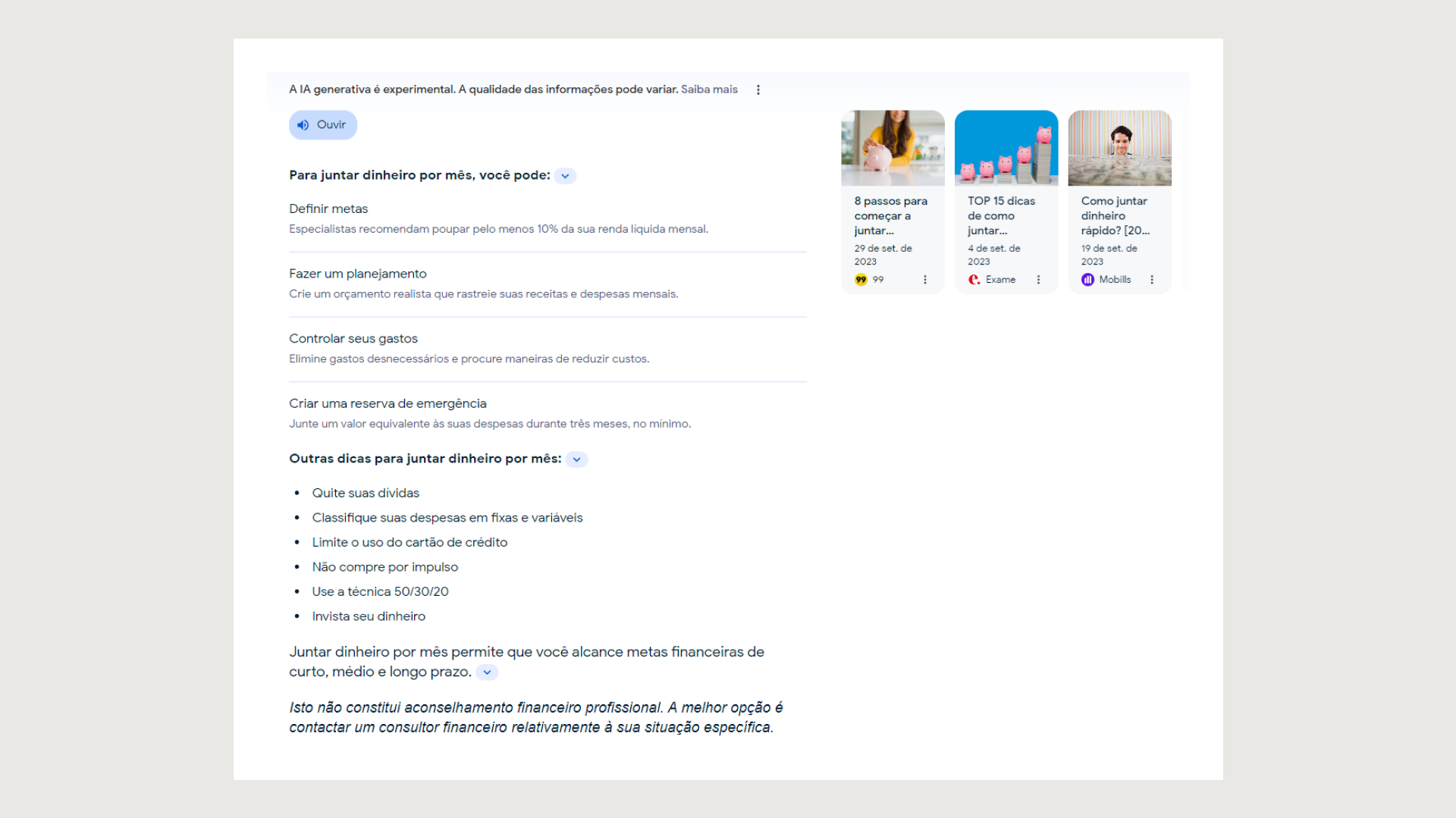

The results page when googling "save money every month." The first thing that comes up is a line that reads, "Generative AI is experimental. The quality of information may vary. Find out more."

In another search involving a hybrid intent, encompassing both informational and commercial aspects, SGE is displayed in a condensed manner, while a list of Featured Snippets offers more comprehensive results just below. Due to space limitations, SGE requires users to click on “See more” to access the complete information. Once expanded, SGE provides somewhat more generalized tips than the Featured results.

Upon clicking on "See more," the page expands and features a list of tips on how to save money and their respective sources.

6. Informational search about games

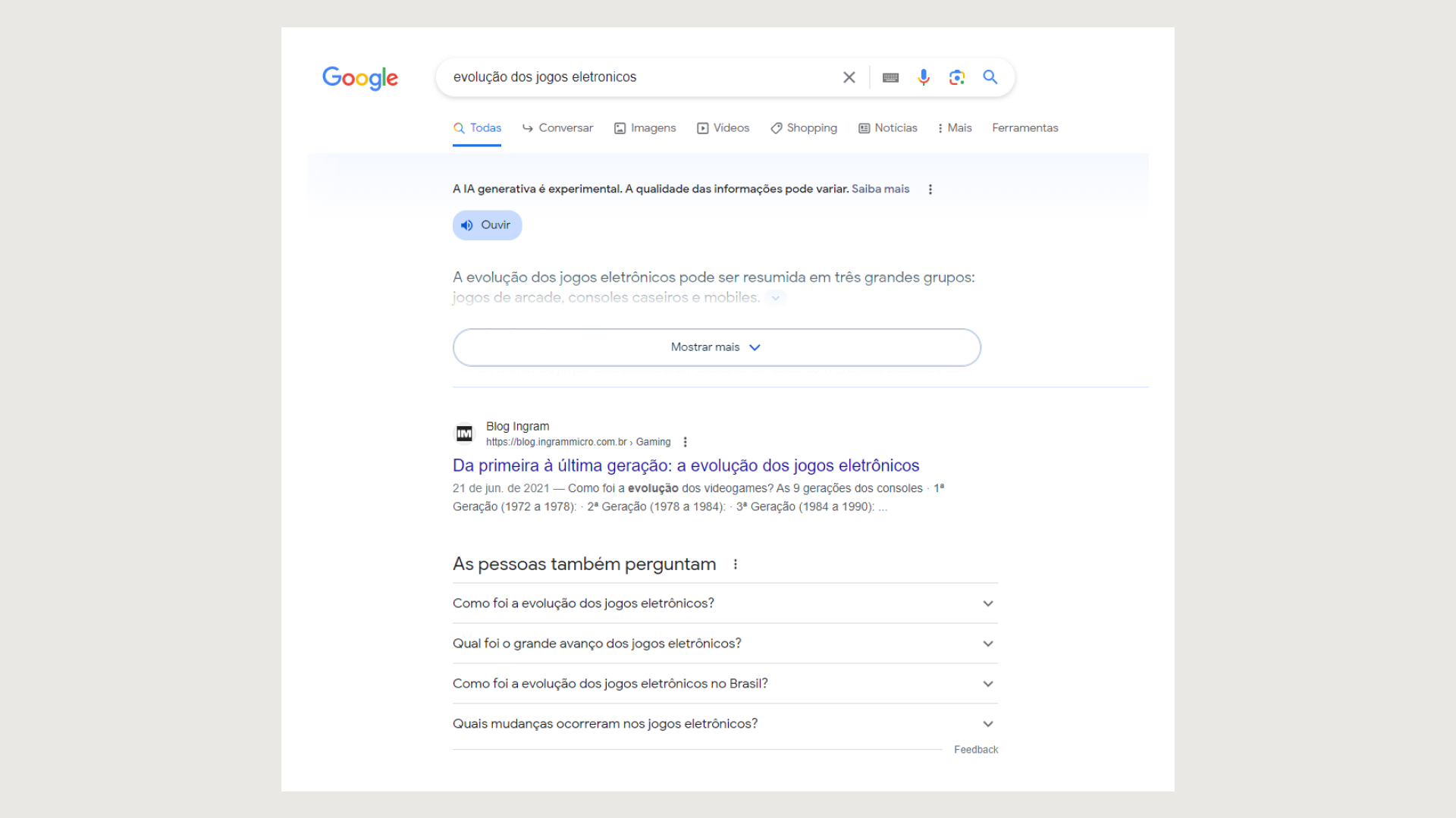

The results page when googling "the evolution of videogames." The first thing that comes up is a line that reads, "Generative AI is experimental. The quality of information may vary. Find out more."

In a purely informational intent search, SGE once again appears in a condensed form, with the Ingram blog ranking as the top organic result. Additionally, a “People Also Ask” box is visible below. For SEO professionals, it is important to note that, according to data from Insight Partners, 57% of the result links mentioned by SGE are derived from the entire first page of organic results. This means that if your brand appears on that page, there is a significant likelihood that it will be referenced by the AI and consequently maintain high visibility.
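To make the overlap figure concrete, here is a minimal Python sketch of how such a share could be computed for a single query. The URLs and the function names `normalize` and `first_page_overlap` are hypothetical placeholders, and this is not the methodology behind the cited data; it only illustrates the idea of comparing the links cited by SGE with the first-page organic results.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so trivial variations still match."""
    parts = urlsplit(url.strip().lower())
    host = parts.netloc.removeprefix("www.")
    path = parts.path.rstrip("/")
    return f"{host}{path}"


def first_page_overlap(sge_links: list[str], organic_links: list[str]) -> float:
    """Share of SGE-cited links that also appear among first-page organic results."""
    if not sge_links:
        return 0.0
    organic = {normalize(u) for u in organic_links}
    matched = sum(1 for u in sge_links if normalize(u) in organic)
    return matched / len(sge_links)


# Hypothetical data for one query (not real measurements).
sge_links = [
    "https://www.example.com/history-of-games/",
    "https://blog.example.org/consoles",
    "https://example.net/arcade-era",
]
organic_links = [
    "https://example.com/history-of-games",
    "https://example.net/arcade-era",
    "https://another.example/retro",
]

share = first_page_overlap(sge_links, organic_links)
print(f"{share:.0%} of SGE links come from the first page")
```

Repeating this over many queries and averaging the results would give a rough picture of how often first-page rankings translate into SGE citations for your own keyword set.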

How can brands prepare for SGE and succeed in this new landscape?

According to the information we have so far, the SERP will change. However, this is not the first time we’ve had to adapt to changes, is it? As in the past, we are always preparing for Google’s next update. The extent to which this poses a challenge for brands depends on the quality of their SEO and content marketing and on how much focus they give it.

SGE doesn’t change the core principle of SEO, which is to ensure visibility and relevance. It is important, therefore, that we see it as a new era for brands who want qualified organic visibility and are already working hard for it. It will still be very necessary to:

- Continue evolving and seeking better practices for organic ranking, focusing on content that prioritizes being user-first and responding to search intent.

- Take greater care in article production: demonstrate expertise in your segment, optimize for SERP features, and look for different ways to stand out in SGE.

- Ensure continuous and in-depth understanding of SGE, delving into concepts like Answer Engine Optimization (AEO), for instance, rather than perceiving it as a “villain” in the SEO landscape.

- Continue to adhere to the principle that content is king and that its quality will always be fundamental, with even greater emphasis on sharing authentic research and data, incorporating user-generated content (UGC), and using various media formats. Use inclusive language, include keywords from related semantic fields, and adapt to hybrid search intent. Additionally, ensure an optimized HTML structure.
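As one rough illustration of the HTML structure point in the list above, the sketch below checks a page's heading outline with Python's standard `html.parser` module. It assumes that "optimized" means exactly one `<h1>` and no skipped heading levels; that is my working assumption for the example, not a documented Google requirement, and `HeadingCollector` and `heading_issues` are hypothetical names.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser passes tag names in lowercase, e.g. "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems found in the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one <h1>, found {h1_count}")
    # Flag jumps such as h2 -> h4 (assumed here to be undesirable).
    for prev, cur in zip(levels, levels[1:]):
        if cur > prev + 1:
            issues.append(f"heading level jumps from h{prev} to h{cur}")
    return issues


# Hypothetical page fragment used only for illustration.
page = "<h1>SGE</h1><h2>Changes</h2><h4>Health</h4><h2>Preparing</h2>"
for issue in heading_issues(page):
    print(issue)
```

A check like this covers only one small aspect of page structure; it says nothing about content quality or search intent, which remain the main focus of the points above.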

To succeed in this new landscape, you have to immerse yourself in it and study it thoroughly. This is precisely what my team and I have been doing, and we recommend that anyone interested in the subject do the same. If your brand has not yet prioritized SEO, content marketing, and the essential work required to attract new users and populate the top of the funnel, now is an excellent time to do so. If needed, look for a team of specialists who can help you diagnose, detect, optimize, measure, and, of course, elevate your website to the top.